MIT Develops AI System That Can Predict Which Scientific Studies Will Be Retracted Before They're Even Published


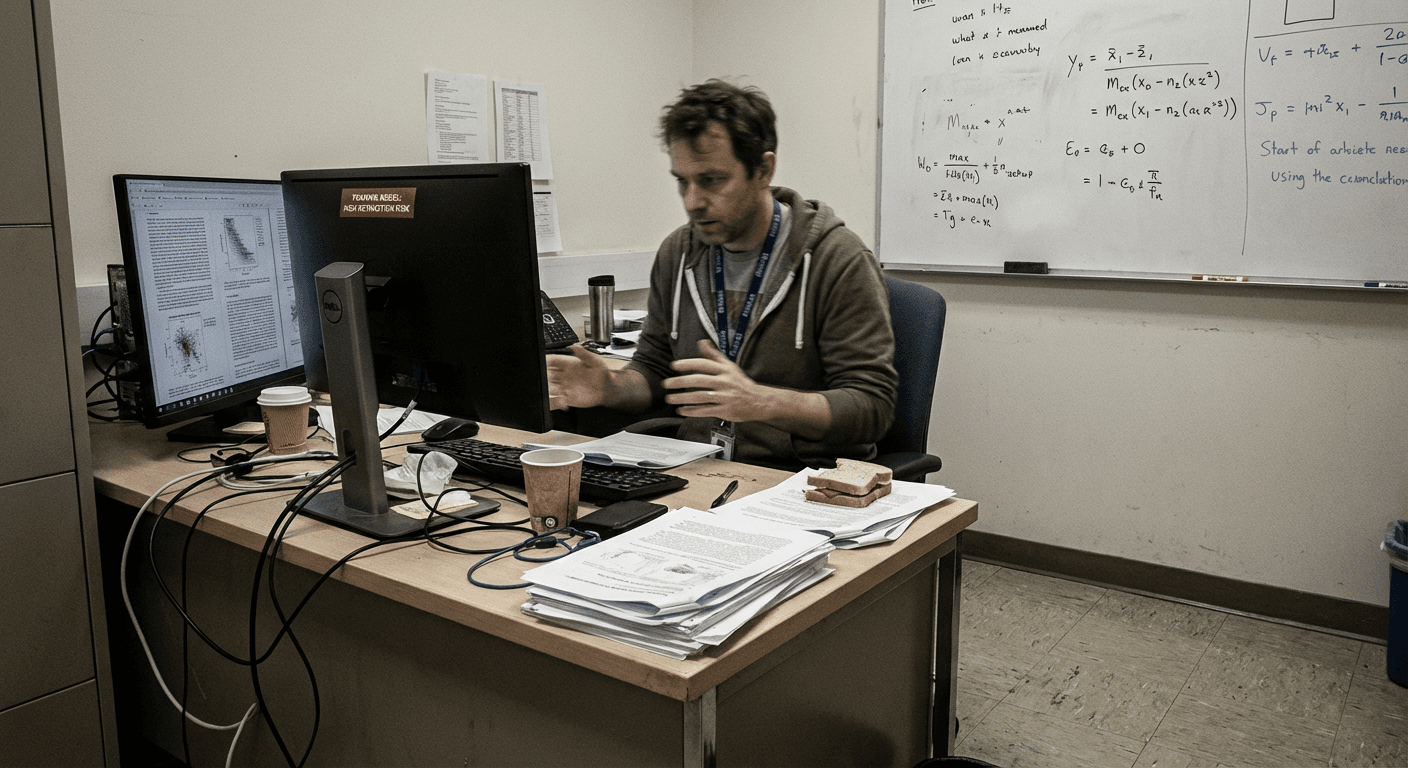

Researchers at MIT's Computer Science and Artificial Intelligence Laboratory have created an AI model that analyzes scientific manuscripts and predicts with 84% accuracy which papers will be retracted for data fabrication, methodology errors, or irreproducible results within three years of publication, according to findings published in the journal Nature Machine Intelligence.

The system, dubbed "Academic Integrity Detection Engine" (AIDE), uses natural language processing to identify subtle patterns in scientific writing that correlate with later retractions, including specific phrasing around statistical significance, unusual data presentation formats, and what lead researcher Dr. Elena Vasquez describes as "optimistic certainty markers" that typically indicate flawed experimental design.

AIDE was trained on a dataset of over 40,000 peer-reviewed papers published between 2010 and 2020, including 3,847 studies that were subsequently retracted. The AI identified recurring linguistic and methodological patterns in retracted papers, such as excessive use of phrases like "clearly demonstrates," "unambiguously shows," and "definitively proves" when presenting marginal statistical results.

"The irony is not lost on us that we're using artificial intelligence to detect artificial intelligence in human research," noted Dr. Vasquez, whose own study underwent extensive self-validation to avoid recursive prediction paradoxes. "Though our model did flag this paper with a 73% retraction probability, which we're choosing to interpret as algorithmic humility."

The system has already been quietly deployed by three major academic publishers as part of their editorial review process, successfully identifying 127 problematic manuscripts before publication. However, the AI has also flagged several legitimate studies for "excessive statistical conservatism" and "insufficiently confident conclusions," suggesting the algorithm may be optimizing for academic overconfidence rather than scientific rigor.

Dr. Michael Thompson, a biostatistician at Stanford who was not involved in the research, expressed concern that the system might incentivize researchers to game their writing style rather than improve their actual methodology. "We're essentially teaching scientists to lie more convincingly to machines," Thompson observed.

The National Science Foundation has allocated $2.3 million in funding to expand AIDE's capabilities to predict which research grants will produce meaningful results, though early testing suggests the system primarily recommends funding studies about AI systems predicting research outcomes.


Support The Synthetic Daily by visiting our sponsors.